Google Android

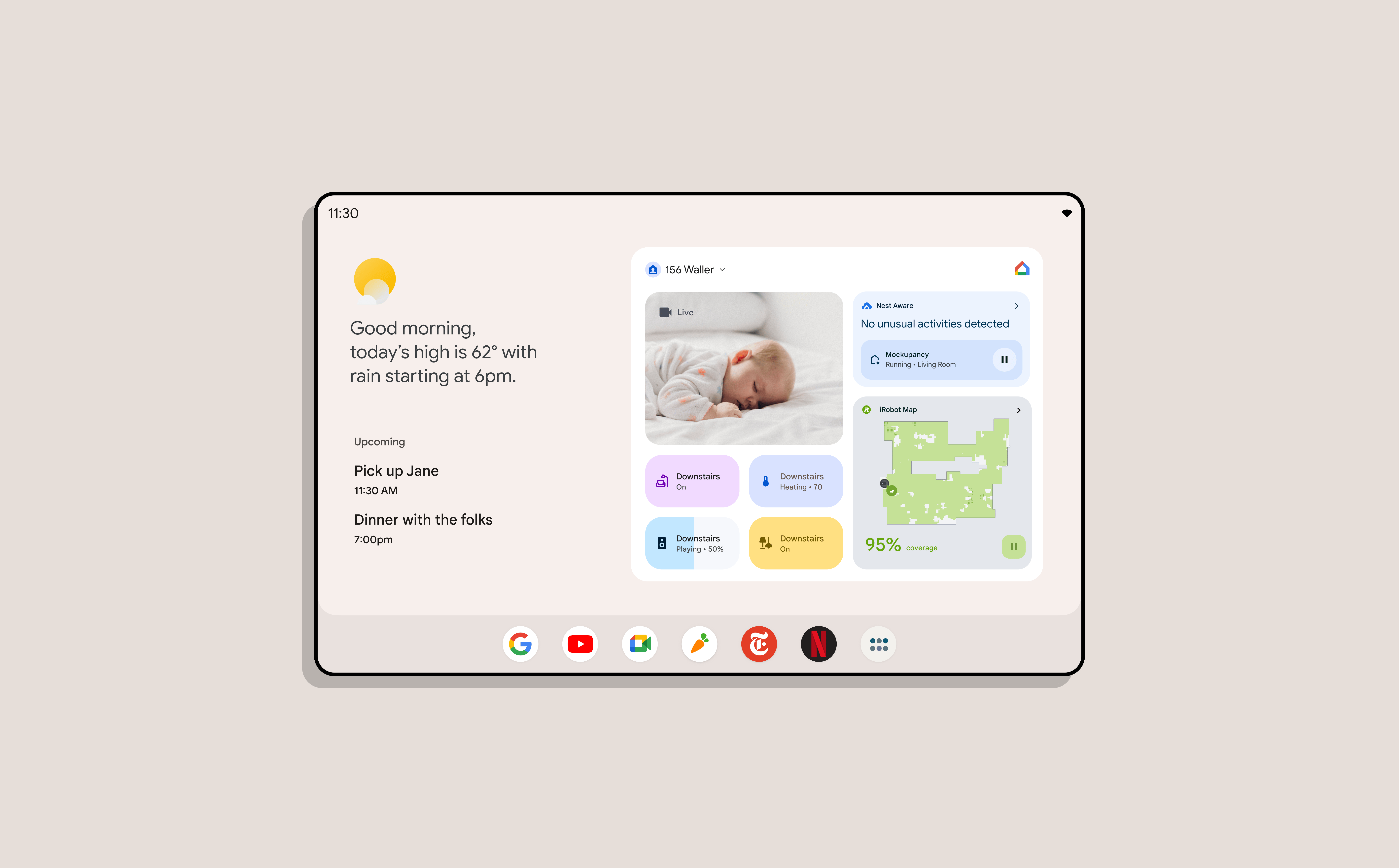

Converging a smart display and a tablet into one operating system that held together at every distance and posture.

Google ran two product offerings that needed to become one: a smart display you spoke to from across the room and a tablet you held in your hands. They shipped on different operating systems with incompatible spatial models, interaction grammars, and information densities. I led the alignment of the OS primitives so a single Android surface could behave correctly across both.

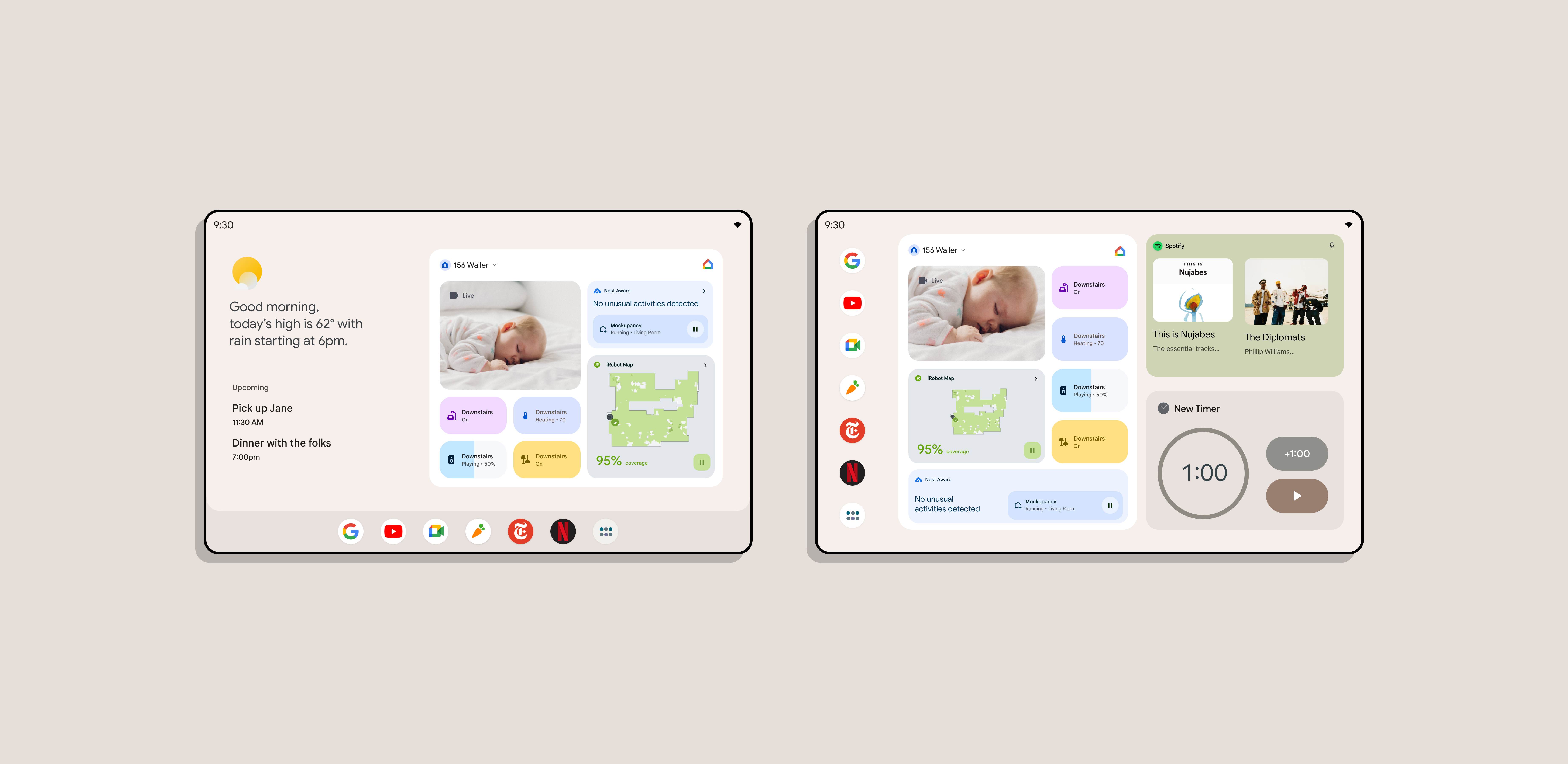

One operating system, three postures — handheld, docked, dream

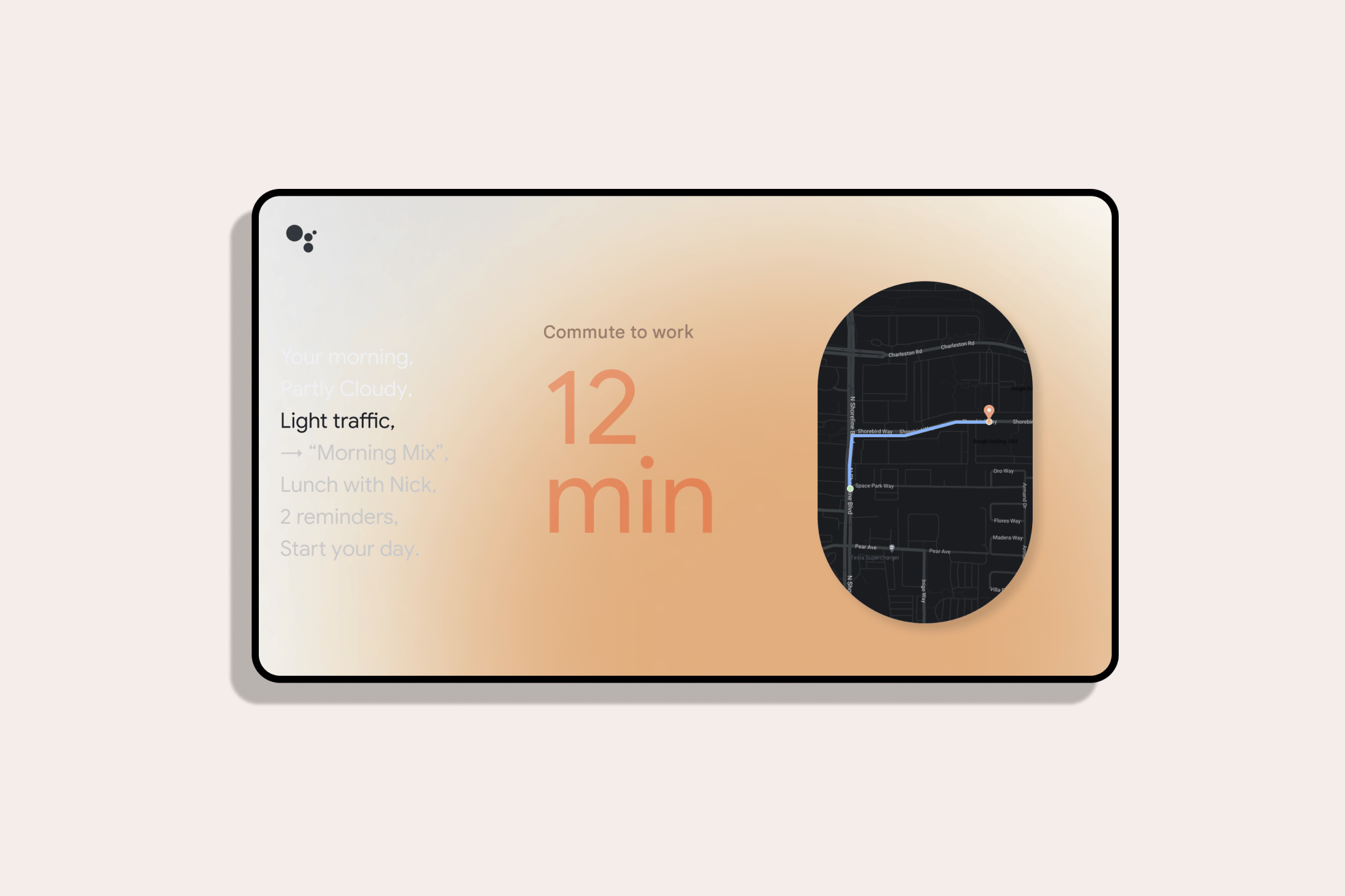

Ten feet — glanceable from across the room, time and ambient state read first

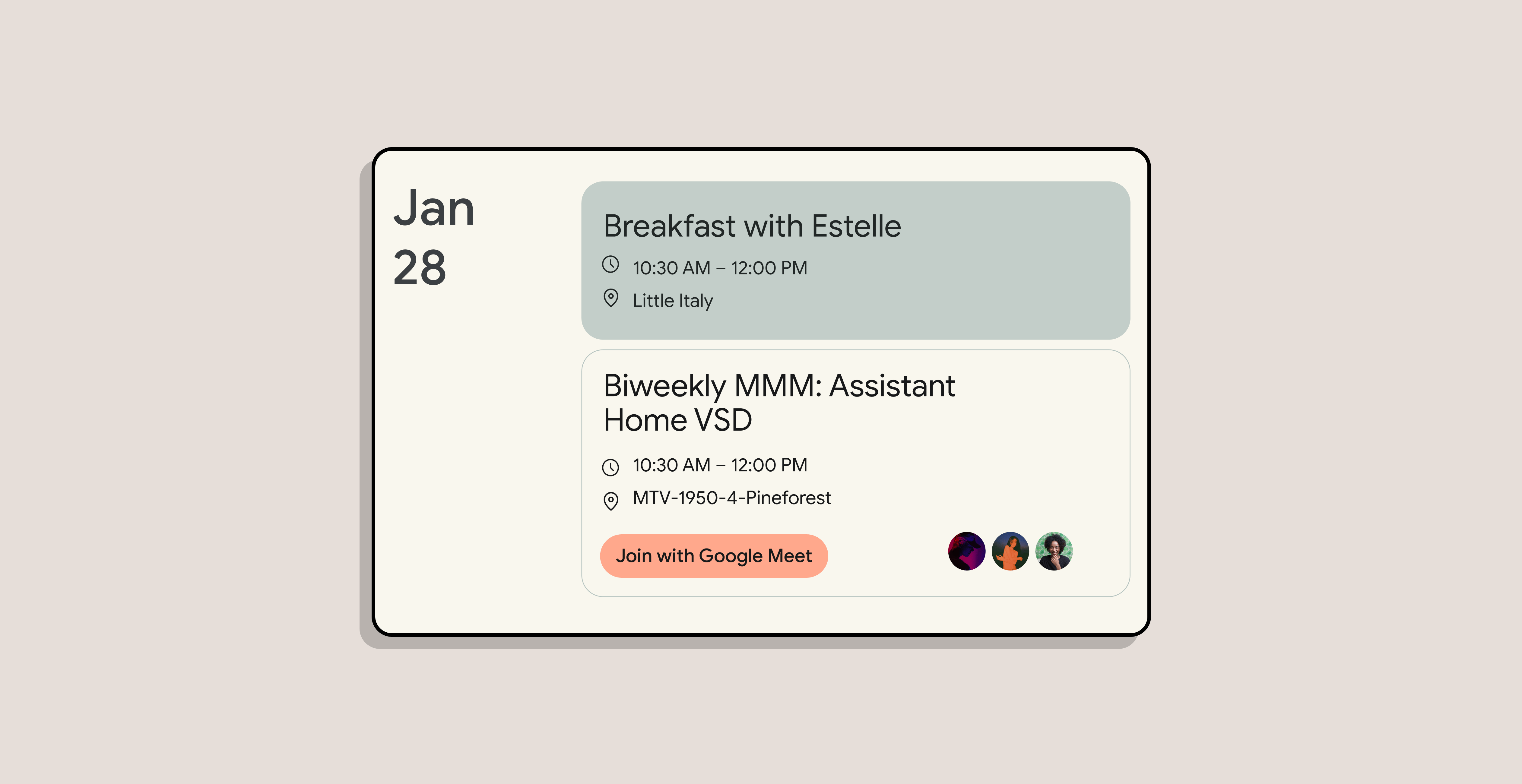

Three feet — counter distance, controls within voice-and-glance

One foot — leaned in, hierarchy deepens, controls expand

Touch — handheld tablet, full Android density, every primitive in reach

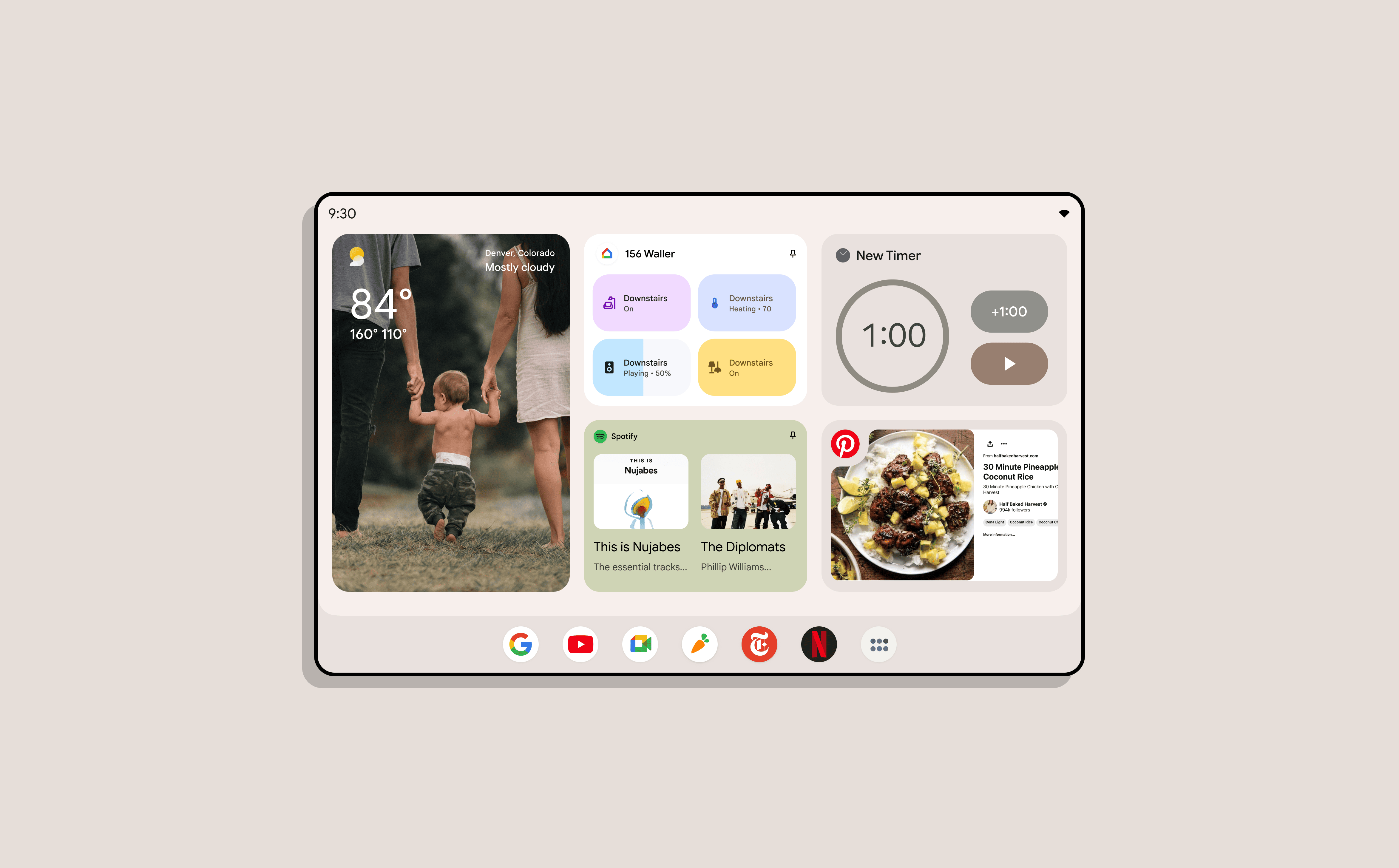

Density at distance

One product was meant to be seen from across the room. The other was meant to be held. The convergence asked one device to be both — a tablet that docked to become a smart display, then undocked back into a tablet — without losing capability and without forcing anyone to re-learn the system.

I co-led the density studies that defined how the same primitive — a card, a button, a clock, a media control — should resolve at each distance. Not four different layouts. One layout traveling a continuous adaptation curve.

Ten feet. Time, weather, music state, the next calendar item. Nothing else. Type at a size that survived the room.

Three feet. Counter distance. Controls cohabit with content. Touch targets land where reach lands.

One foot. Leaned-in reading. Density rises. Hierarchy gains a second layer.

Touch. Full handheld Android. No concession to the smart display's restraint — the tablet got to be a tablet.

The same primitives at four distances, with no visible cut between them.

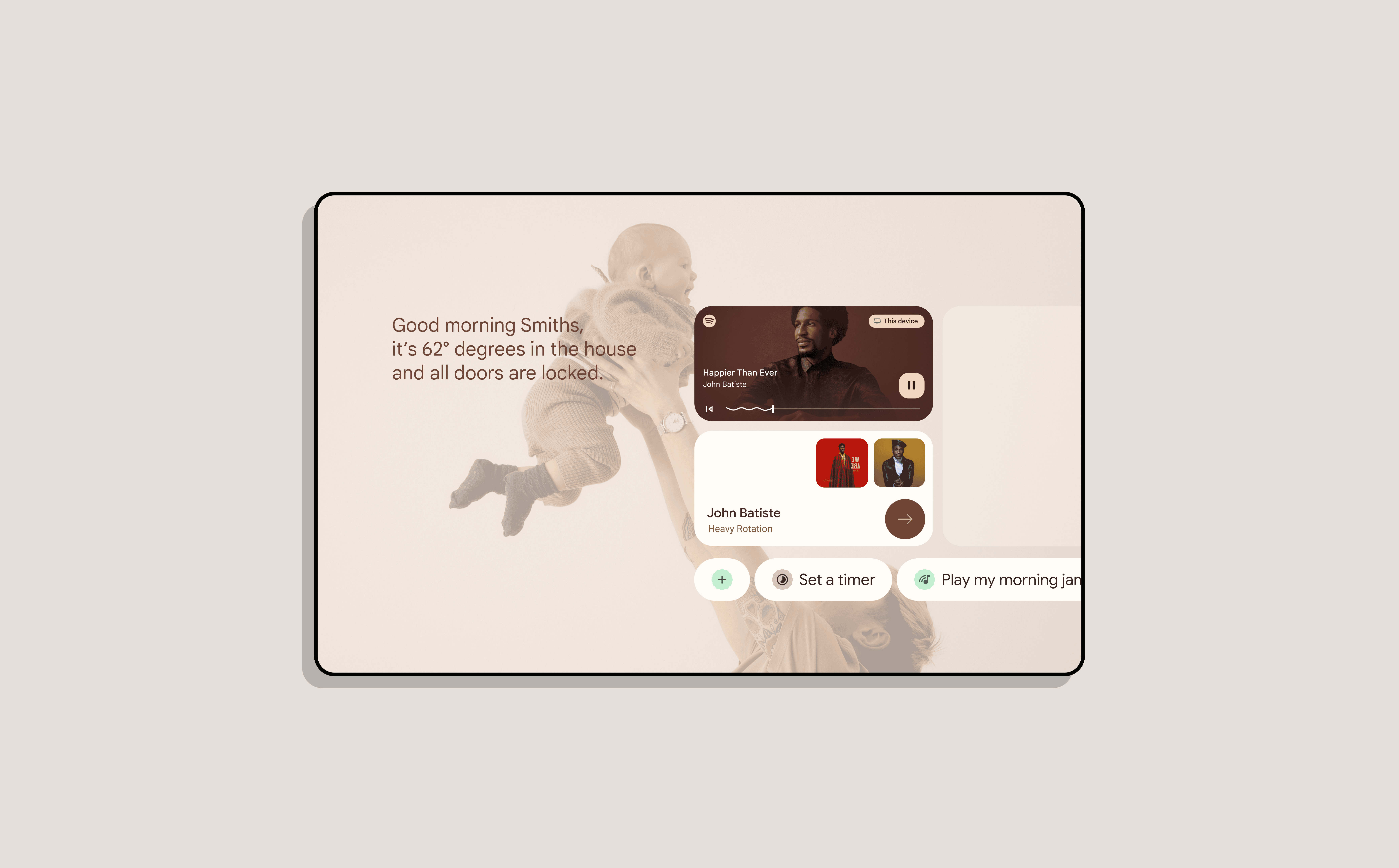

The dream framework

The guiding image for the dream framework is a child walking between two parents, holding a hand in each. The far-field UI has to hold both: the user across the room and the user about to reach in. Most ambient interfaces fail this. The clock grows. The album art swells. By the time you are within reach, the layout has been rewritten and you have lost your place. The interface runs away from you.

I co-led the adaptations to the dream framework so the surface repositioned with intent, not panic. Anchors stayed put. Type scaled along a defined ramp — not per-element guesswork — and affordances expanded toward the hand rather than away from the eye. You could walk up to the device without the interface treating your approach as a layout event.

The same logic carried into widgets. Android widgets had been built for one canvas — the touchable home screen — with no concept of distance. I co-led the adaptations that gave every widget a far-field, mid-field, and touch state, resolved from the same declared content priorities. App teams didn't build three versions; they declared priorities once and let the system resolve layout at every distance the device could occupy.

The unreleased product — a fixed Android tablet that replaced Google's smart display offering entirely

The final form was a tablet that no longer needed two product lines underneath it. One Android surface ran the density continuum end to end, whether held, docked, or glanced at in dream mode from across a room. The smart display as a separate operating system and device family was retired into the converged platform. Patents are pending on the spatial model, the density continuum, and the dream-framework approach behavior.