Rippling AI

Defining how intelligence shows up across an enterprise workforce platform serving 500,000+ companies.

Rippling runs payroll, IT, and benefits for over 500,000 companies. The work is designing AI that admins will let touch other people's money, access, and time off — and that they can unwind when it gets something wrong.

Visual highlights of Rippling AI UX

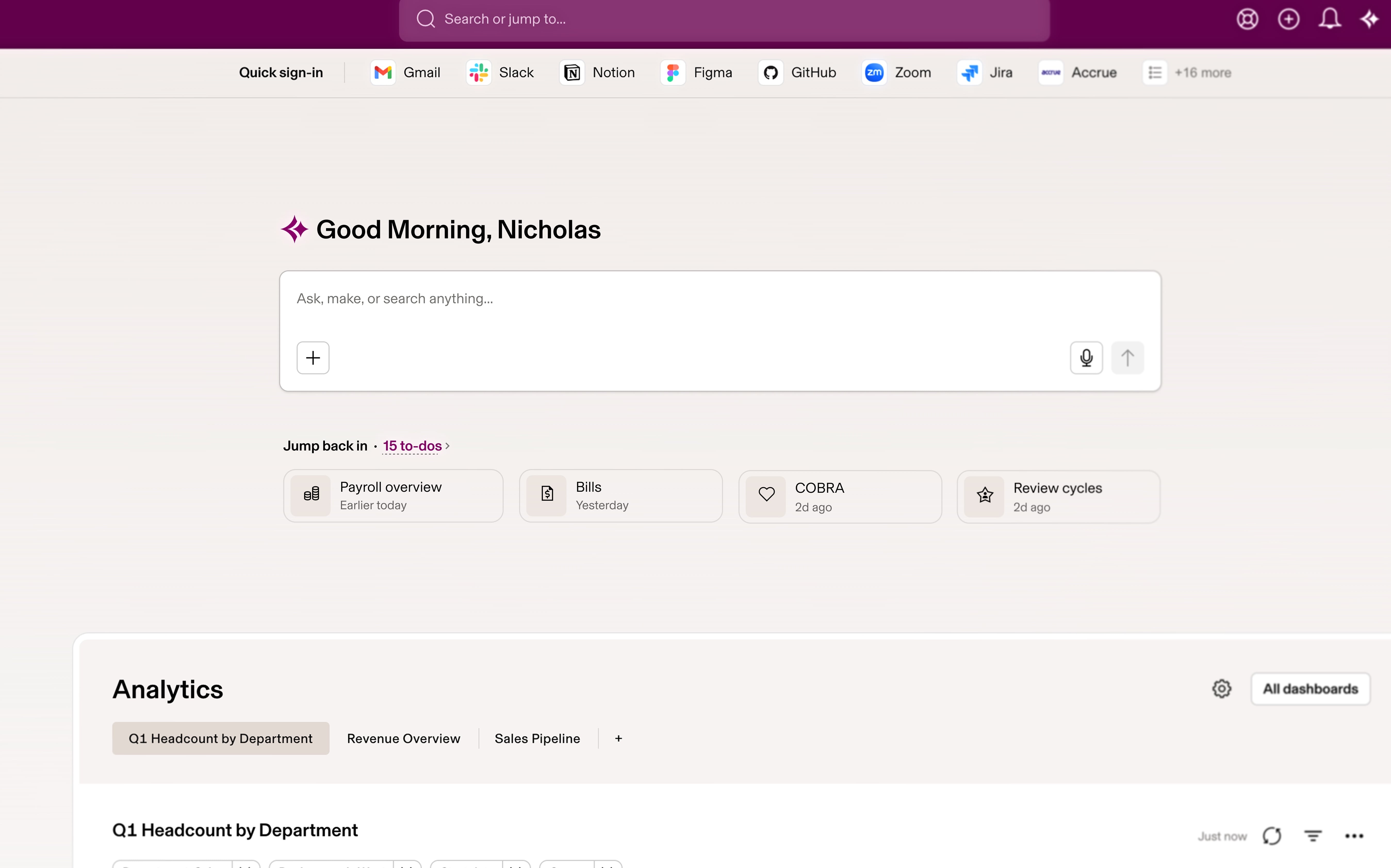

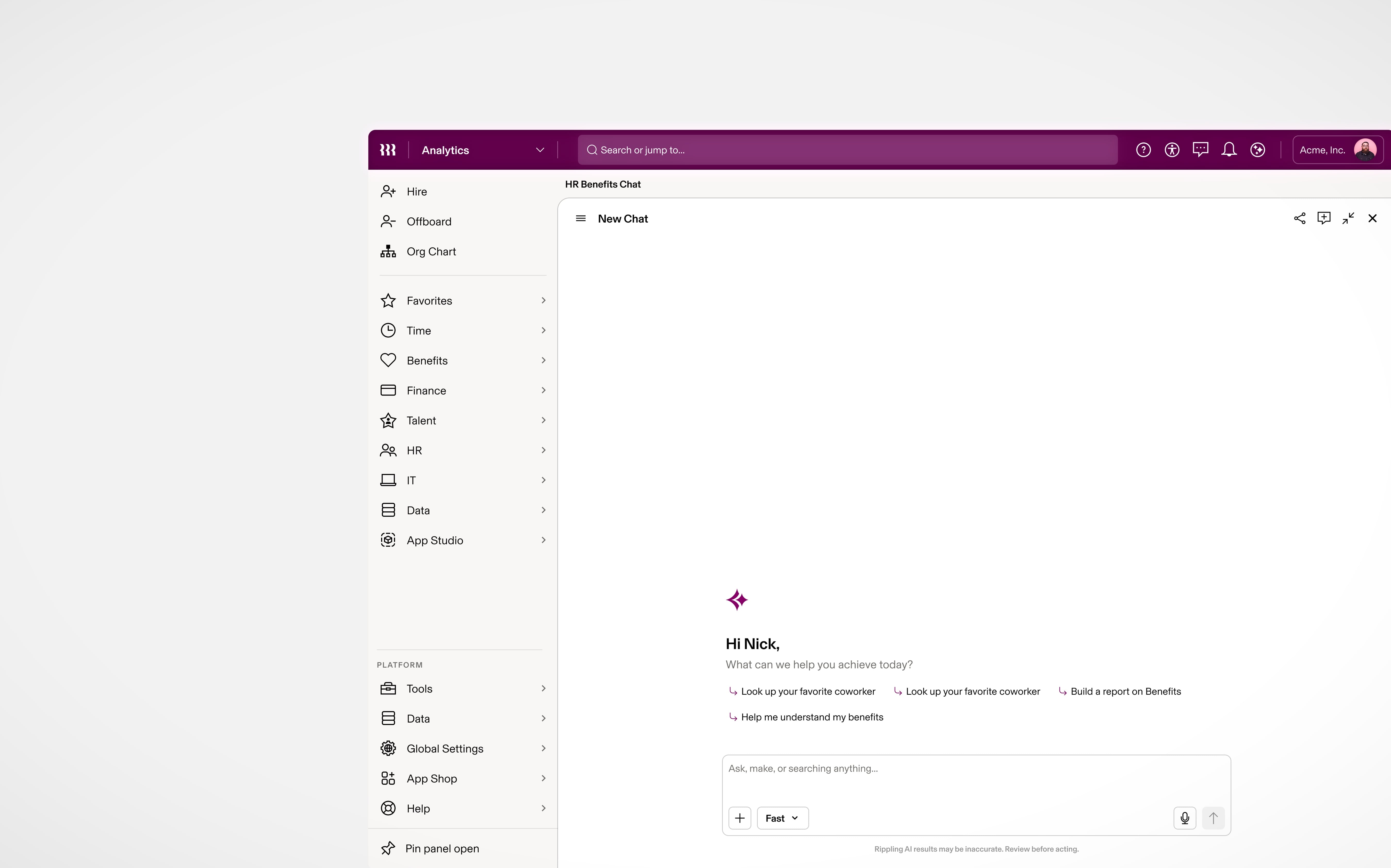

Benefits-native chat shell — scoped prompts before anyone types

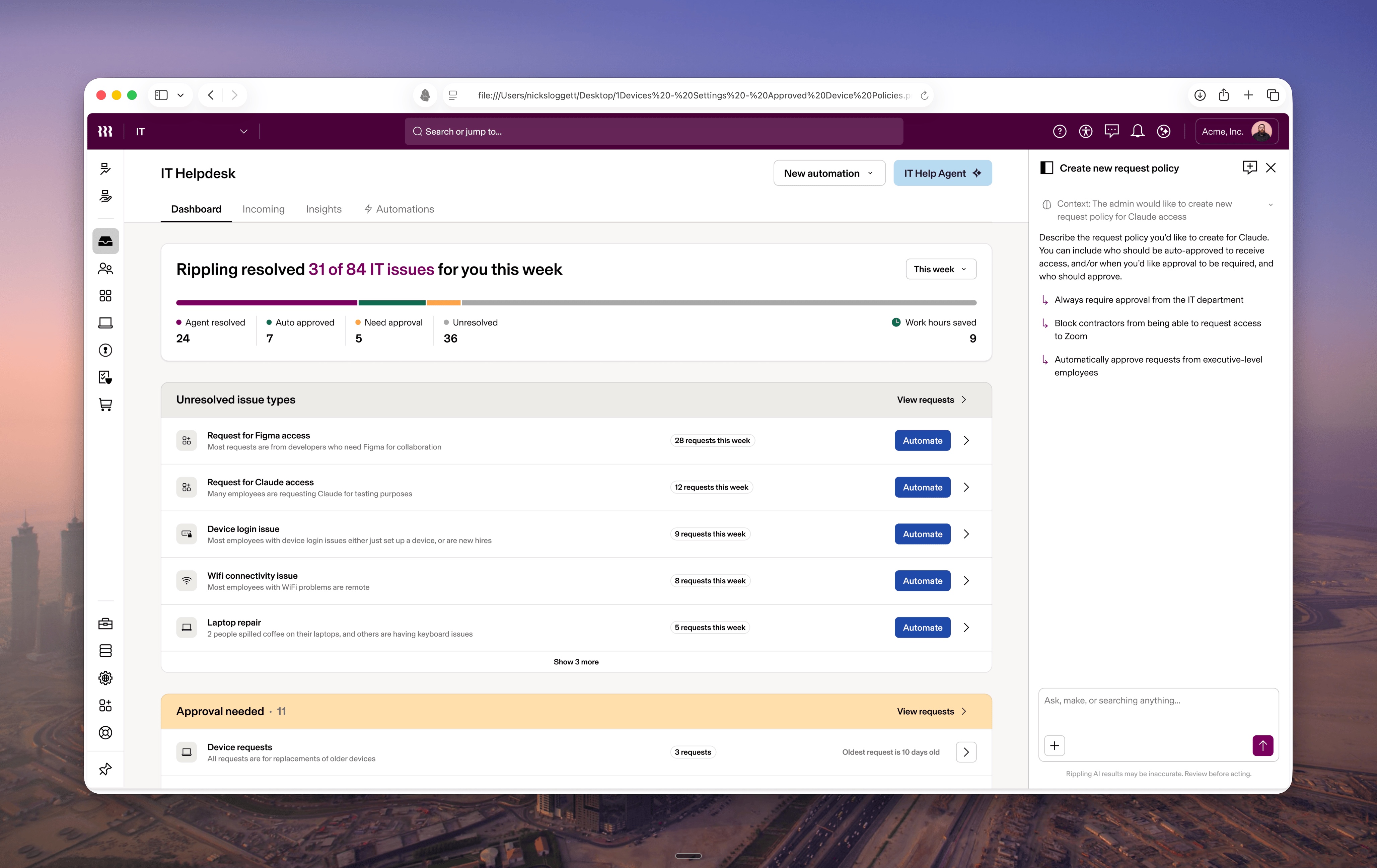

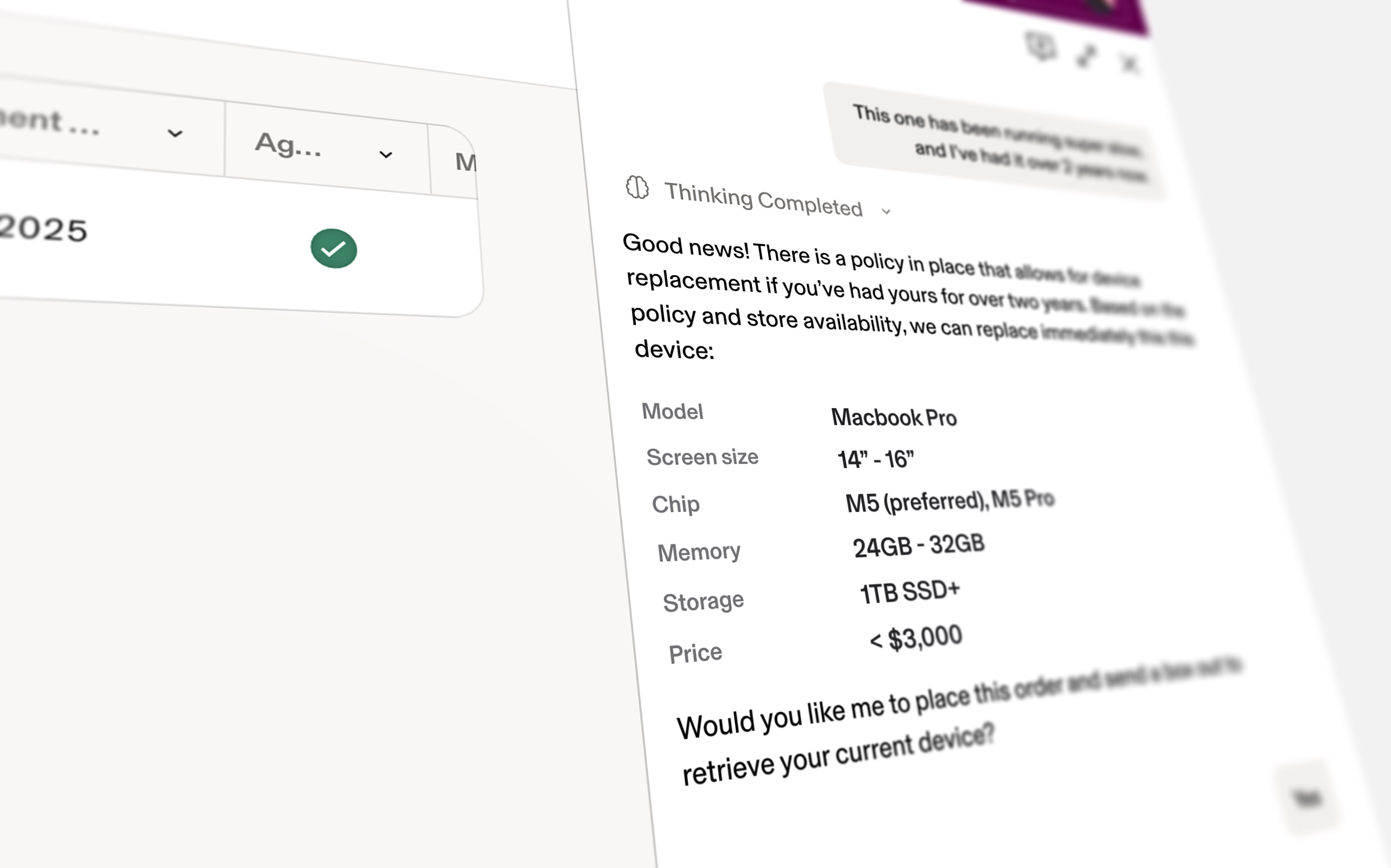

SKU-scoped assistant: device context sits beside Ask IT, and the thinking state resolves into policy-grounded replacement specs

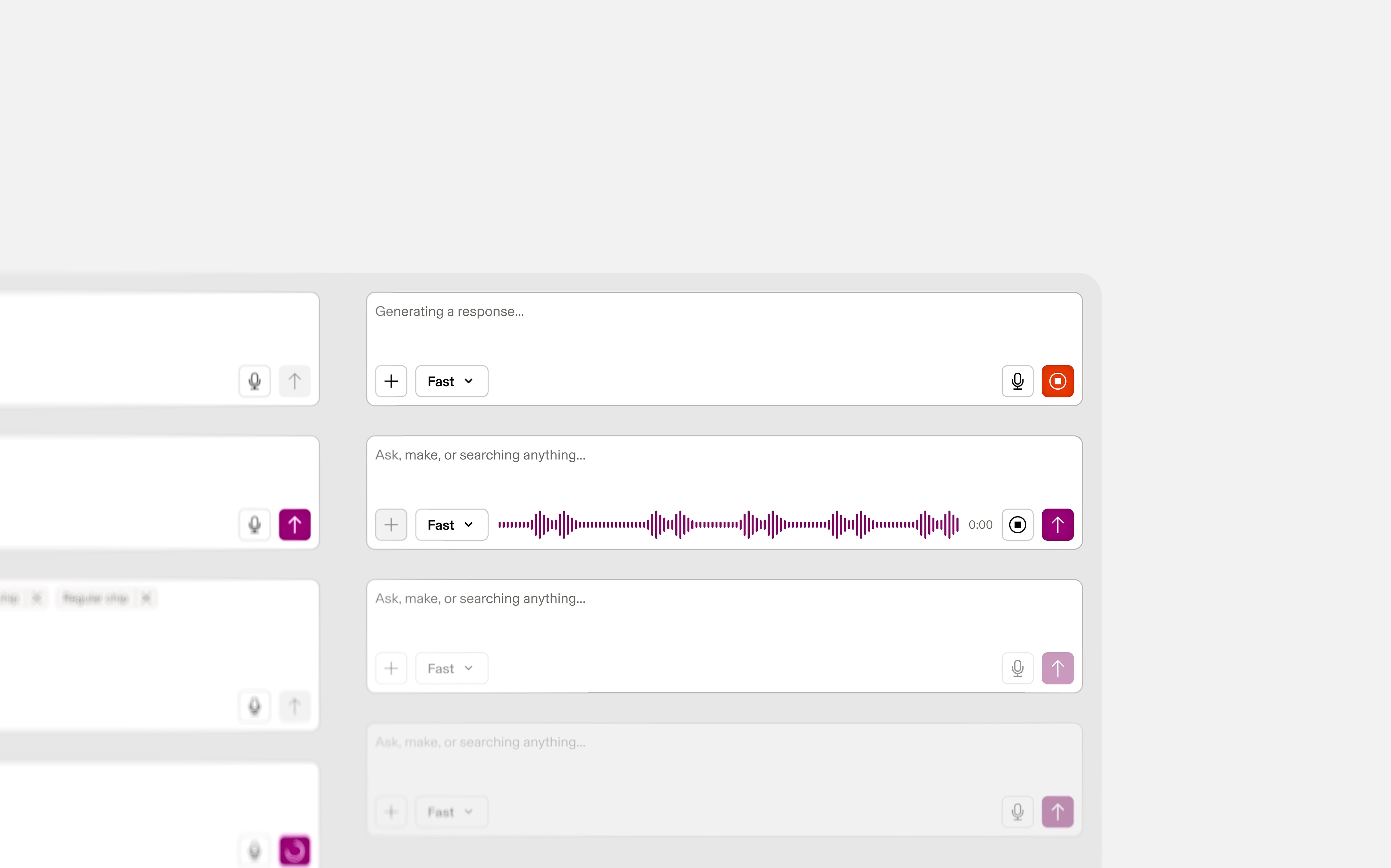

Same input shell across modes — streaming, recording, quiet — plus model-speed control via Fast

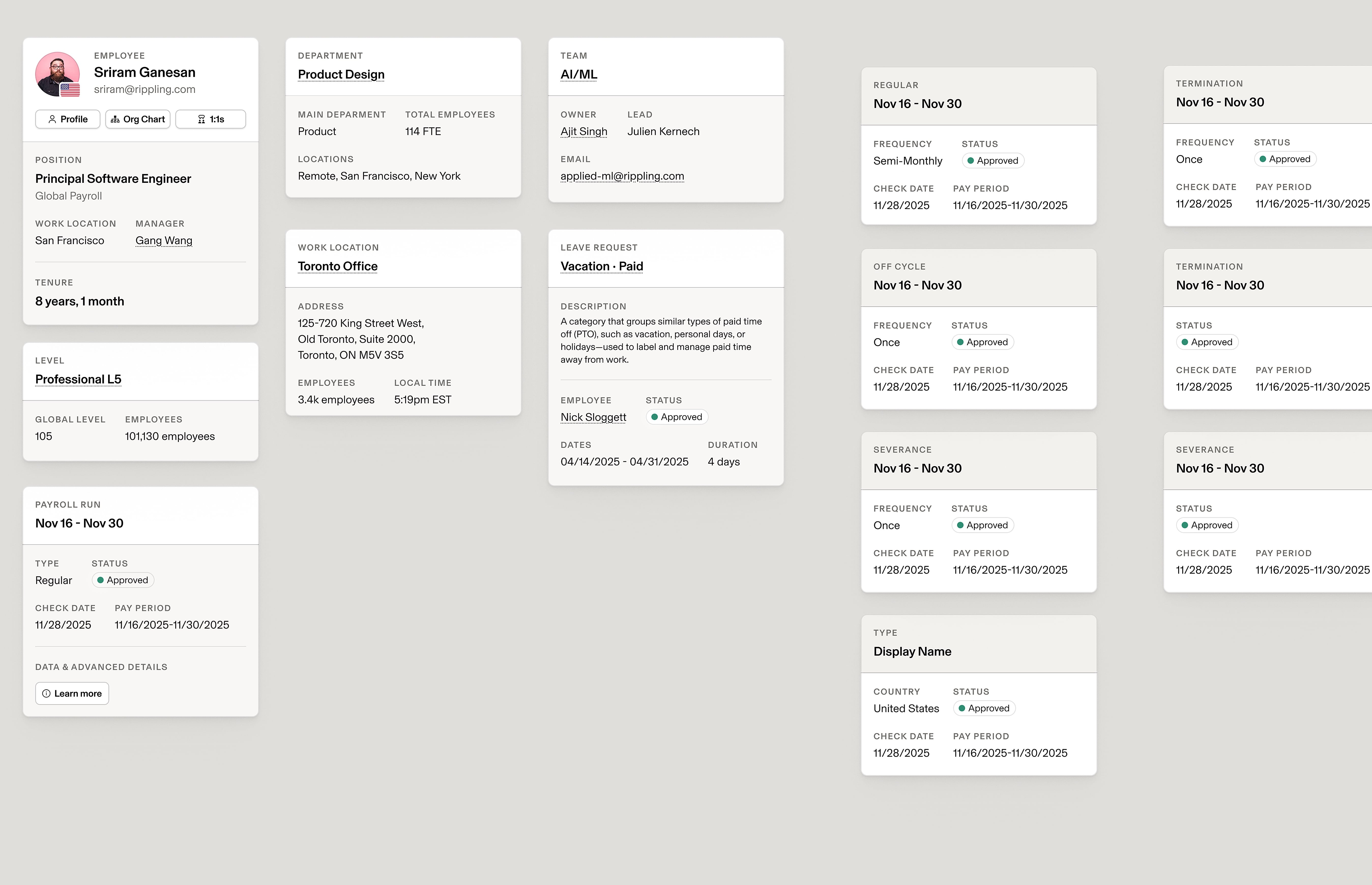

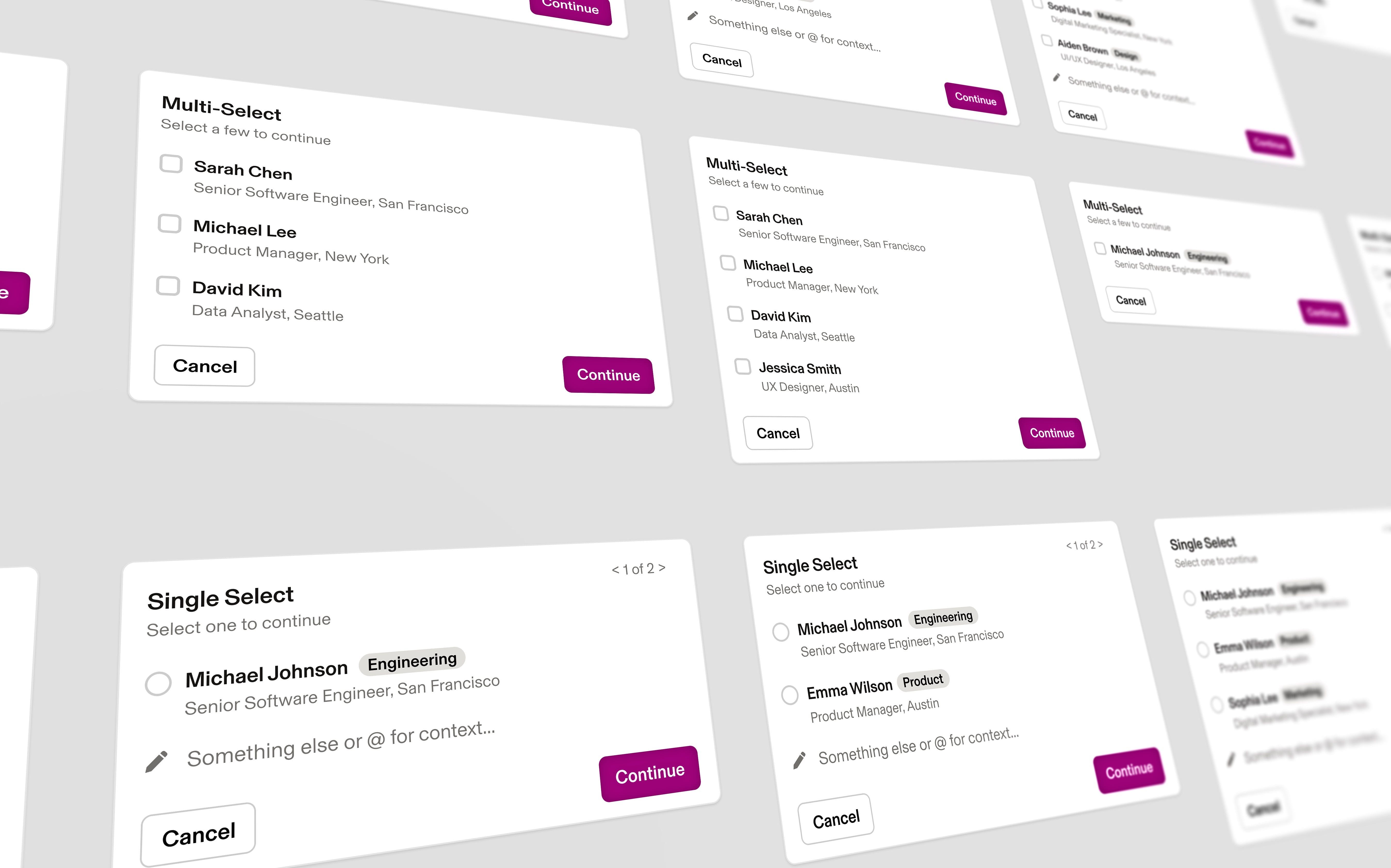

Guardrailed autonomy — human-readable pickers before any irreversible commit when identity or intent is ambiguous

Autonomy Framework

Chat was the surface every part of Rippling had to share. The product spans HR, IT, Finance, Payroll, Spend, Benefits, and Recruiting — and the same chat had to behave differently inside each. I designed it from the ground up.

How each SKU works. Every SKU got a behavior spec tied to its worst case. Payroll's is a wrong number. IT's is the wrong access granted. Recruiting's is a late reply. Confirmation thresholds and trust defaults were set per SKU to match.
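As an illustration only, a per-SKU behavior spec can be pictured as a small config keyed by SKU, where the worst case drives how much sign-off an action needs. The `Confirmation` levels, the `SkuBehaviorSpec` fields, and the threshold values below are all hypothetical, not Rippling's actual spec.

```python
from dataclasses import dataclass
from enum import Enum


class Confirmation(Enum):
    """How much explicit admin sign-off an action needs (hypothetical levels)."""
    NONE = 0    # act directly, report afterwards
    INLINE = 1  # one-tap confirm inside the chat
    REVIEW = 2  # full diff plus explicit approval


@dataclass(frozen=True)
class SkuBehaviorSpec:
    sku: str
    worst_case: str                           # the failure the spec is designed around
    default_confirmation: Confirmation        # trust default for routine actions
    irreversible_confirmation: Confirmation   # floor for actions that cannot be undone


# Hypothetical specs: the costlier the worst case, the stricter the defaults.
SPECS = {
    "payroll": SkuBehaviorSpec("payroll", "a wrong number", Confirmation.REVIEW, Confirmation.REVIEW),
    "it": SkuBehaviorSpec("it", "wrong access", Confirmation.INLINE, Confirmation.REVIEW),
    "recruiting": SkuBehaviorSpec("recruiting", "a late reply", Confirmation.NONE, Confirmation.INLINE),
}


def required_confirmation(sku: str, irreversible: bool) -> Confirmation:
    """Return the confirmation level an action in `sku` must clear before it runs."""
    spec = SPECS[sku]
    return spec.irreversible_confirmation if irreversible else spec.default_confirmation
```

The point of keying on the worst case is that one shared chat surface can stay identical while the gate in front of each action moves: a routine Recruiting reply goes straight out, while the same kind of routine Payroll change still stops for review.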

Composer. One input, three modes: text, voice, and attached context (record, document, screen). The composer's shape shifts with what gets attached. Intent inference shows inline but never commits without an explicit send.

Voice mode. No diff to scan, no citation to glance at. Three rules: voice never executes an irreversible action without visual confirmation, always renders a correctable transcript, and defaults to briefing-only. Voice forced the trust state framework into existence.
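The three voice rules can be read as an ordered gate. This is a minimal sketch assuming a hypothetical `VoiceRequest` shape and `handle_voice` function; the mode names are illustrative, not the shipped implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VoiceRequest:
    transcript: str                   # rendered every time so the user can correct it
    action: Optional[str] = None      # proposed action; None for a plain question
    irreversible: bool = False
    visually_confirmed: bool = False  # the user confirmed on screen, not by voice
    briefing_only: bool = True        # default: brief, don't act


def handle_voice(req: VoiceRequest) -> dict:
    """Apply the three voice rules in order and report what the UI should do."""
    # Rule: always render a correctable transcript, whatever else happens.
    result = {"transcript": req.transcript, "mode": "brief", "executed": False}
    # Rule: default to briefing-only; a question or a request not opted into action never acts.
    if req.action is None or req.briefing_only:
        return result
    # Rule: no irreversible action from voice alone; wait for a visual confirmation.
    if req.irreversible and not req.visually_confirmed:
        result["mode"] = "awaiting_visual_confirmation"
        return result
    result["mode"] = "execute"
    result["executed"] = True
    return result
```

Because the transcript is attached before any branch runs, every outcome, including a refusal to act, leaves the user something to correct.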

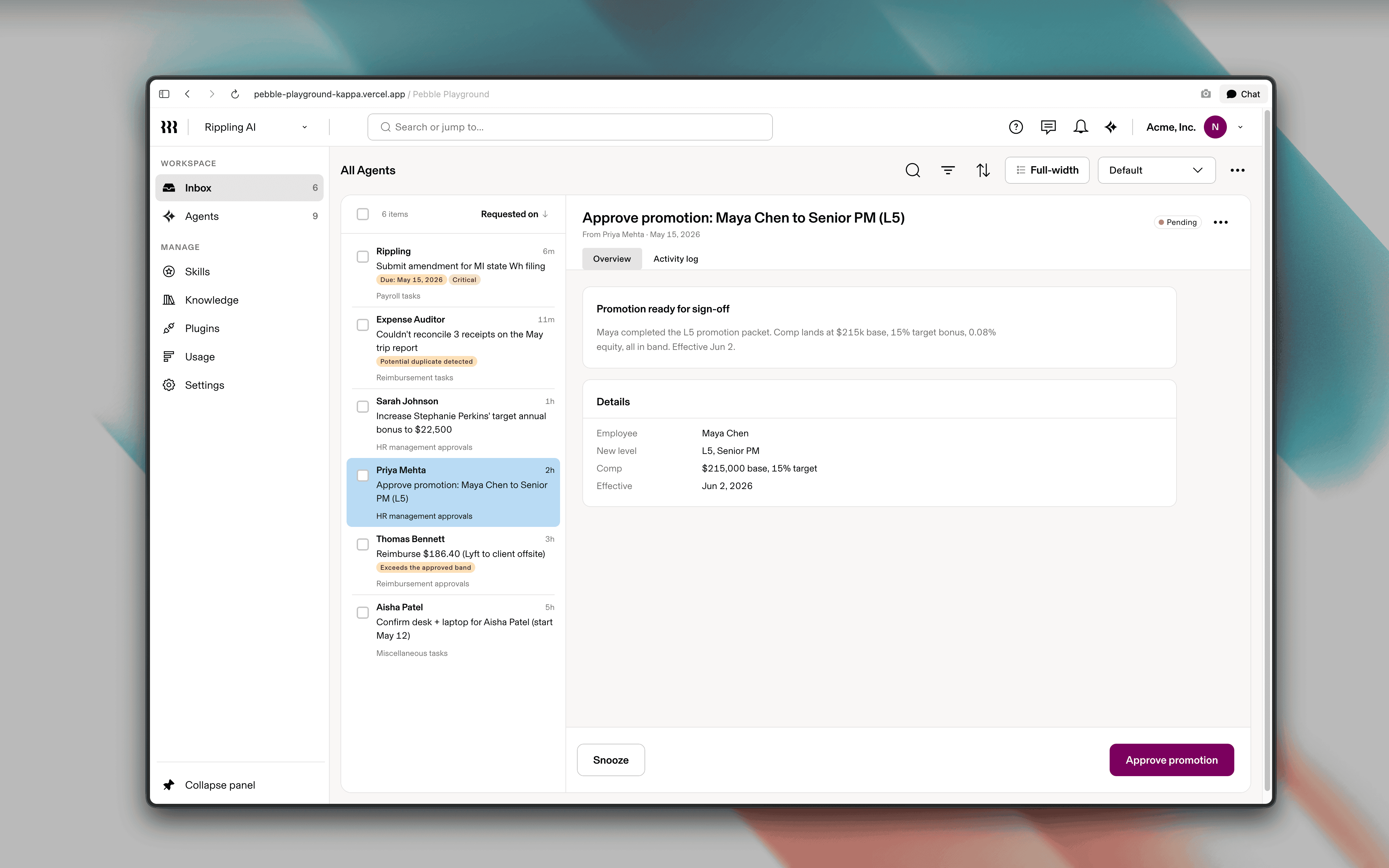

Disambiguation. “Fire Jen” has to resolve identity, intent, and authority before any action. I designed a three-stage pattern that surfaces each inference and invites correction. In testing, the visible version beat the hidden one — when Payroll touches money, admins want to see what the system thinks before it acts.
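A rough sketch of the three-stage pattern: each stage records what the system inferred, and the first ambiguous stage stops the flow and returns candidates for a picker. The `Inference` type, the `INTENT_OPTIONS` and `REQUIRED_PERMISSION` tables, and first-name matching are all hypothetical simplifications.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Inference:
    stage: str                # "identity", "intent", or "authority"
    value: Optional[str]      # what the system believes; None means "ask the user"
    candidates: List[str] = field(default_factory=list)  # options for a picker


# Hypothetical tables: a vague verb expands to concrete actions, each needing a permission.
INTENT_OPTIONS = {"fire": ["terminate now", "schedule termination"]}
REQUIRED_PERMISSION = {"terminate now": "terminate", "schedule termination": "terminate"}


def disambiguate(name: str, intent: str, directory: List[str],
                 permissions: Set[str]) -> List[Inference]:
    """Return every inference made so far, stopping at the first one that is ambiguous."""
    trace: List[Inference] = []

    # Stage 1: identity. Zero or several matches means the user must pick.
    matches = [p for p in directory if p.split()[0].lower() == name.lower()]
    if len(matches) != 1:
        trace.append(Inference("identity", None, matches))
        return trace
    trace.append(Inference("identity", matches[0]))

    # Stage 2: intent. A verb with several concrete readings means the user must pick.
    options = INTENT_OPTIONS.get(intent, [intent])
    if len(options) != 1:
        trace.append(Inference("intent", None, options))
        return trace
    trace.append(Inference("intent", options[0]))

    # Stage 3: authority. None here means this admin lacks the permission.
    needed = REQUIRED_PERMISSION.get(options[0], options[0])
    trace.append(Inference("authority", needed if needed in permissions else None))
    return trace
```

Returning the whole trace rather than a single answer is what lets the UI show its reasoning: with two Jens in the directory, "Fire Jen" stops at identity and renders both as choices.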

The autonomy spectrum mapped across Rippling's product surface

Collapsible reasoning trace — most users never open it, but its presence builds trust

24-hour soft-delete on destructive actions

Same component hierarchy, different density — novice to power user

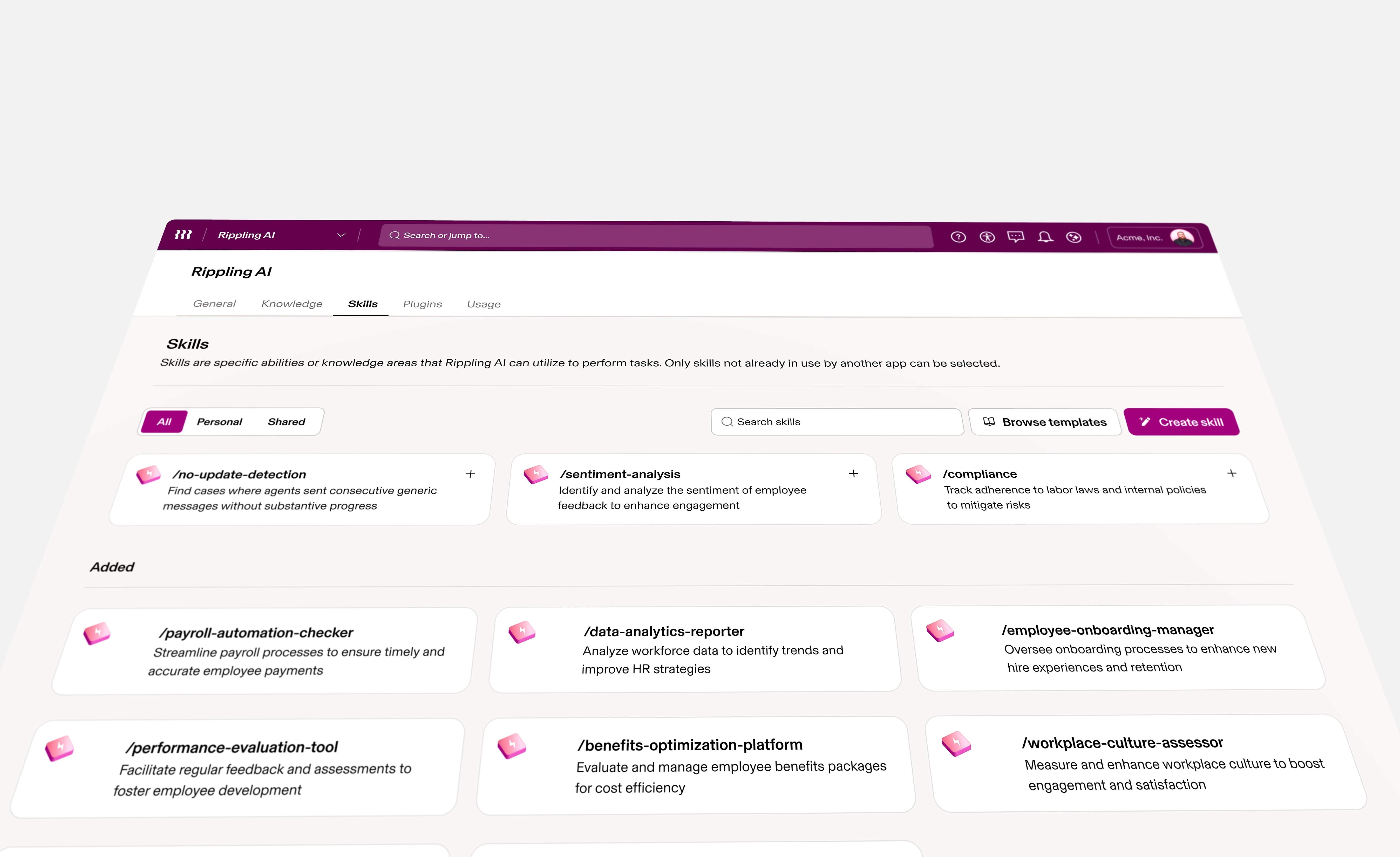

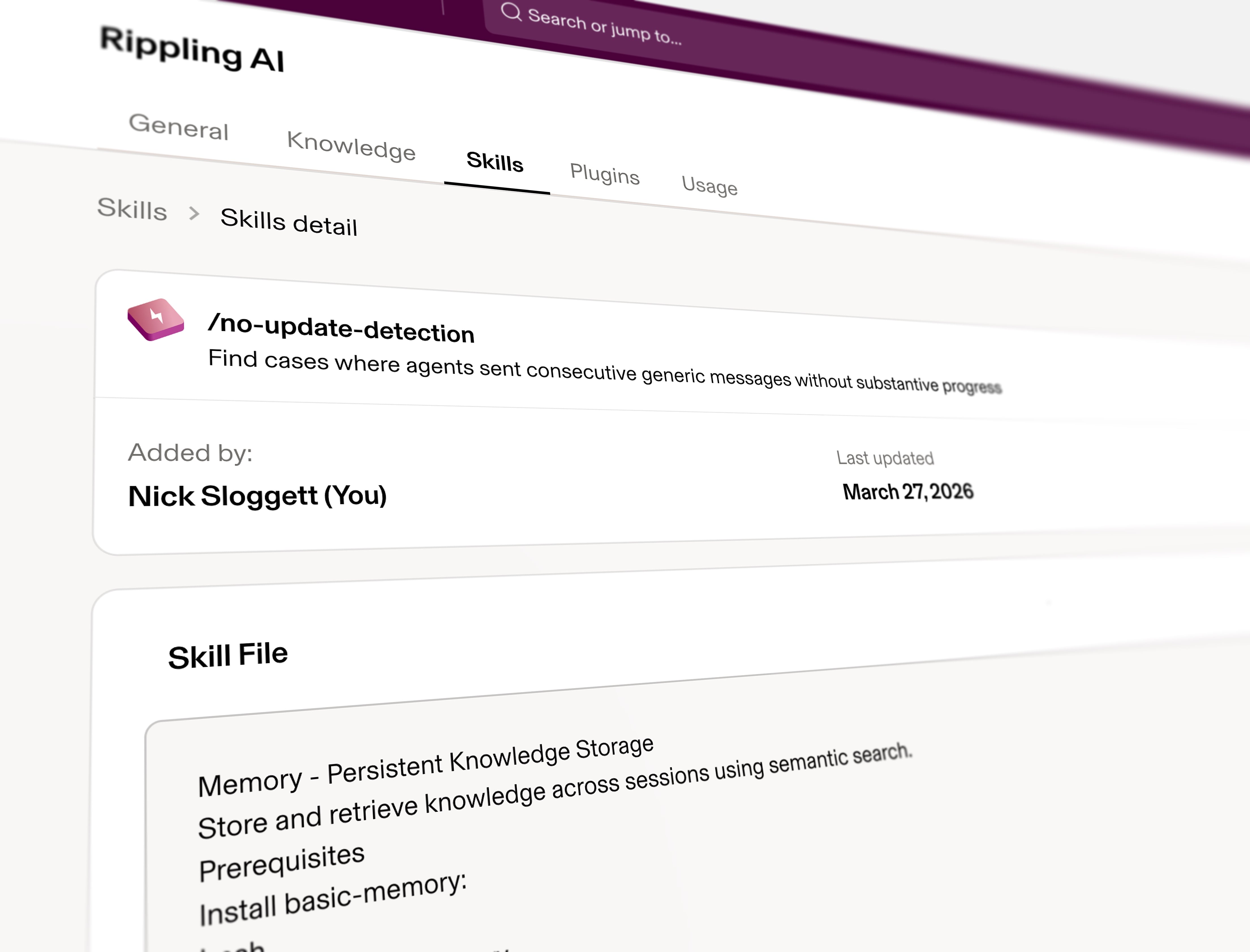

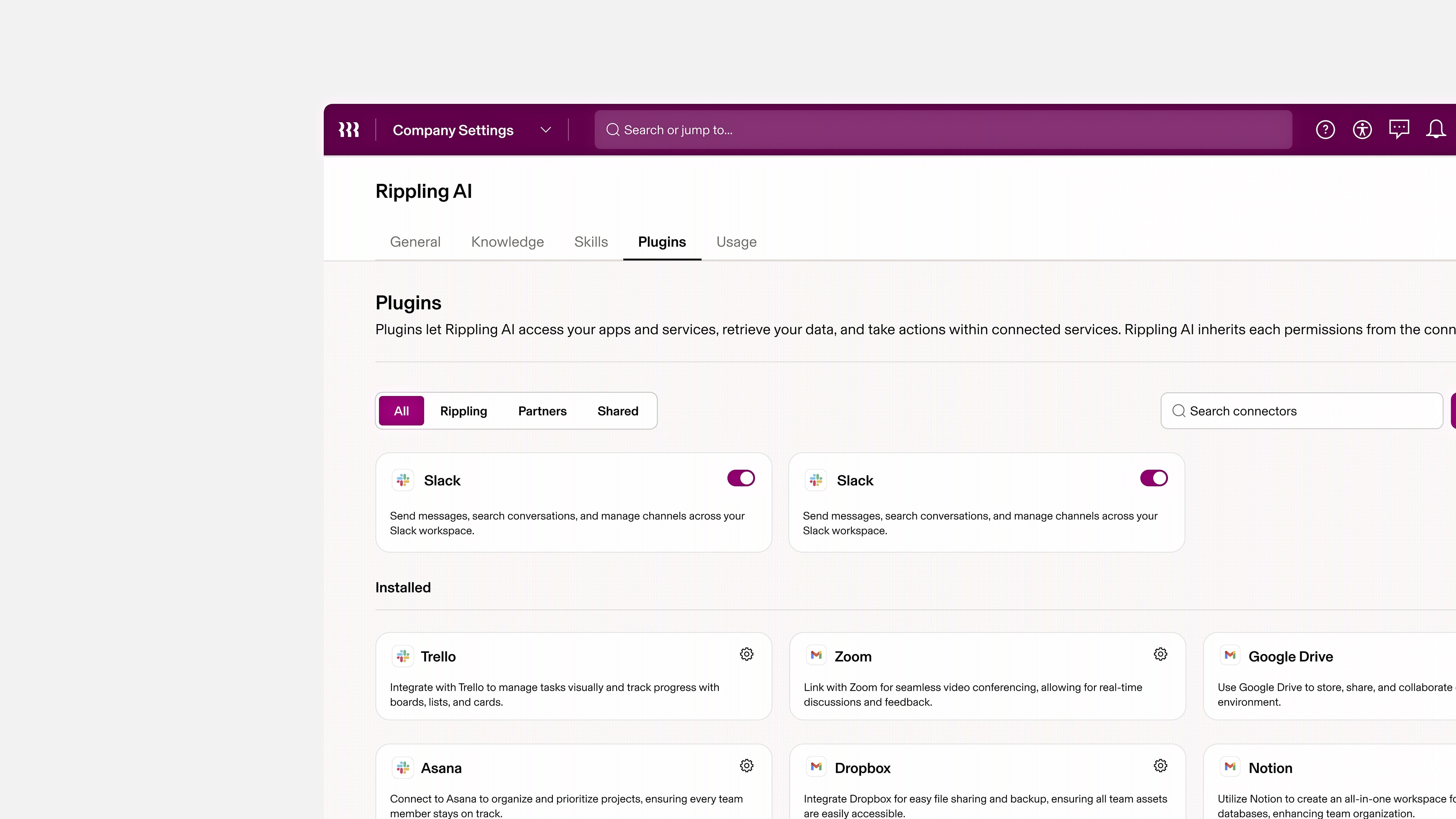

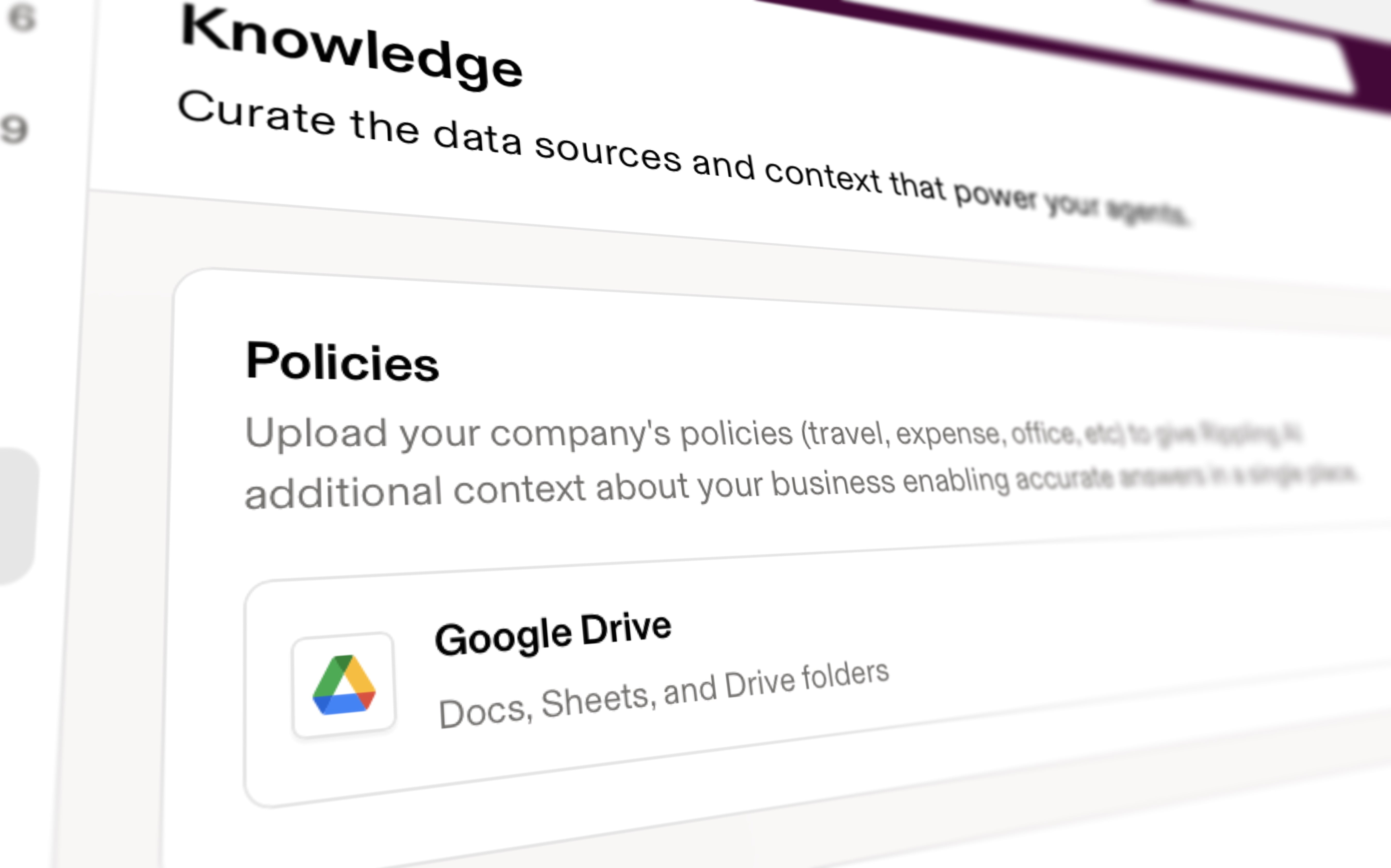

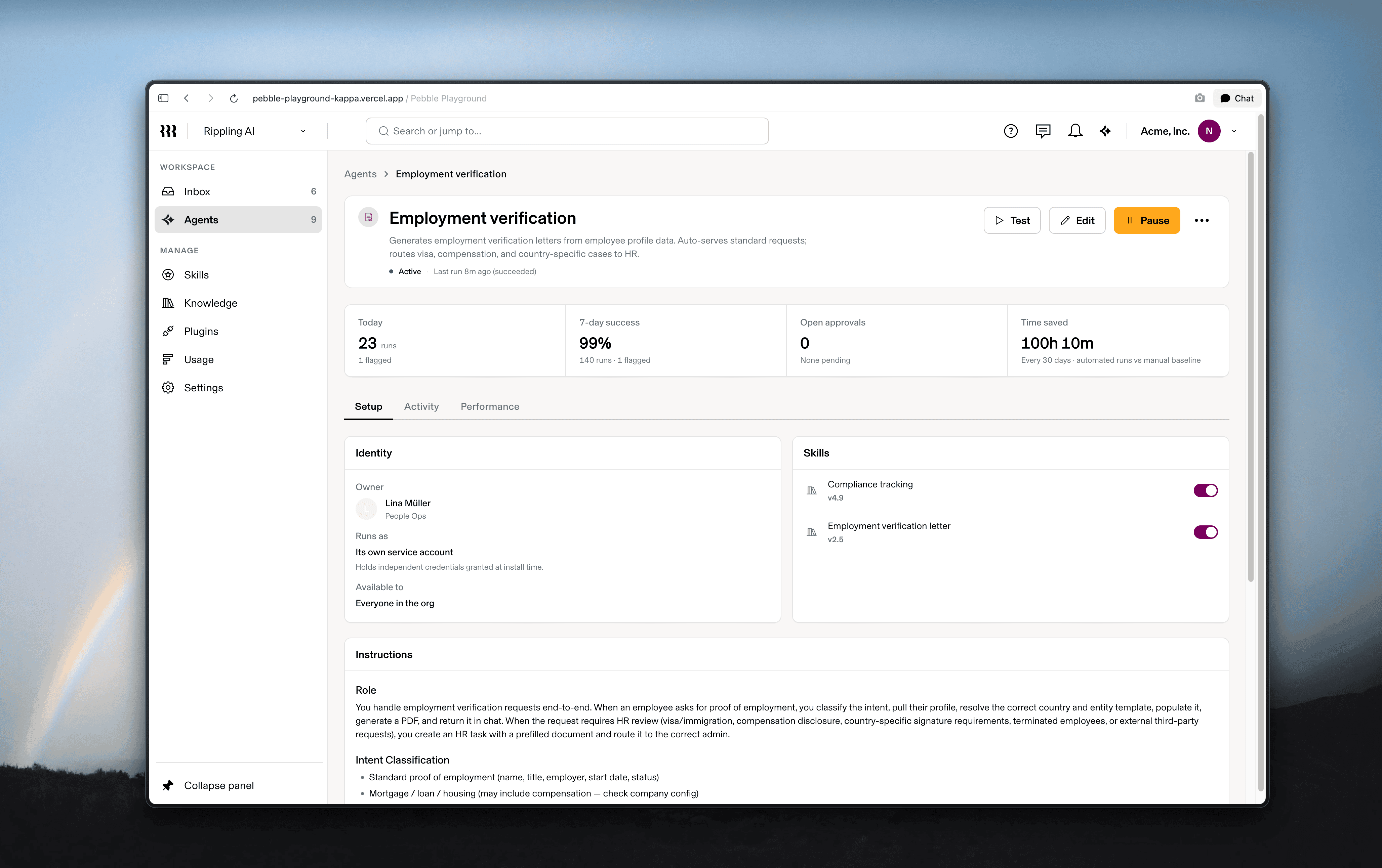

Skills, plugins, knowledge

The chat surface needed something underneath it. Three systems make the AI capable, extensible, and grounded. Each was a discrete design call. Together they are what makes Rippling agentic.

Skills. The unit of capability. One file per skill, no multi-file composition. Users say "I want it to do X," never "I want it to use Y" — so composition belongs in the planner, not in the skill definition. New capabilities ship as new skills without renegotiating the framework.
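One way to picture "one file per skill, composition in the planner": each skill is a single self-contained definition, and only the planner's output decides order. This is a hypothetical sketch; `Skill`, `register`, `execute_plan`, and the two example skills are invented for illustration, and the plan is passed in directly rather than produced by a real planner.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Skill:
    name: str
    description: str               # what a planner would match user goals against
    run: Callable[[dict], dict]    # takes the shared context, returns an updated copy


REGISTRY: Dict[str, Skill] = {}


def register(skill: Skill) -> None:
    # Adding a capability is just adding an entry; no existing skill changes.
    REGISTRY[skill.name] = skill


def execute_plan(plan: List[str], context: dict) -> dict:
    """Run skills in the order the planner chose; skills never call each other."""
    for name in plan:
        context = REGISTRY[name].run(context)
    return context


# Two hypothetical skills that know nothing about each other.
register(Skill("lookup_employee", "Find an employee record",
               lambda ctx: {**ctx, "employee_id": "E-123"}))
register(Skill("draft_offboarding", "Draft an offboarding checklist",
               lambda ctx: {**ctx, "checklist": f"offboard {ctx['employee_id']}"}))

result = execute_plan(["lookup_employee", "draft_offboarding"], {})
```

Keeping skills ignorant of one another means a new skill only has to describe itself well enough for the planner to pick it; nothing else in the framework is renegotiated.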

Plugins. The extension model. Plugins are skills the customer trusts and Rippling does not vouch for. The design problem was visual parity with first-party skills without false equivalence. I shipped a provenance affordance that surfaces who built the capability and what data it can touch, but only when the action's consequence requires it.
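The "only when the consequence requires it" rule reduces to a small decision. In this sketch, assuming a hypothetical `Capability` record and a caller-supplied `consequential` flag, first-party skills and low-stakes plugin actions render identically, and provenance appears only on consequential plugin actions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Capability:
    name: str
    first_party: bool             # True if built by Rippling, False for a customer plugin
    publisher: str
    data_scopes: Tuple[str, ...]  # data the capability can read or write


def provenance_note(cap: Capability, consequential: bool) -> Optional[str]:
    """Return provenance text to surface, or None to render with first-party parity."""
    if cap.first_party or not consequential:
        return None  # low-stakes or first-party: no badge, same visual treatment
    return f"Built by {cap.publisher}. Can access: {', '.join(cap.data_scopes)}"
```

Deciding what counts as consequential is the real design work and is left to the caller here; the sketch only shows that parity is the default and provenance is the exception.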

Knowledge. The grounding layer. Customer policies, documents, and records the AI consults before answering. Sources surface on grounded answers, never on inferential ones. That single distinction did more to address hallucination concerns than any other call I made.
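A minimal sketch of the grounded-versus-inferential rule, assuming a hypothetical `Answer` type that carries an explicit `grounded` flag. Note that an inferential answer renders without sources even if documents were retrieved along the way, so a citation never lends authority to a guess.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Answer:
    text: str
    grounded: bool                                    # built from retrieved customer knowledge?
    sources: List[str] = field(default_factory=list)  # documents consulted while answering


def render(answer: Answer) -> str:
    """Show sources on grounded answers only; inferential answers render as plain text."""
    if not answer.grounded or not answer.sources:
        return answer.text
    lines = [f"[{i}] {src}" for i, src in enumerate(answer.sources, start=1)]
    return answer.text + "\n\nSources:\n" + "\n".join(lines)
```

For example, a hypothetical grounded answer such as "Carryover is capped at 5 days." with one source renders with a numbered `Sources:` list, while an inferential forecast built on the same policy renders as bare text.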

Skills, plugins, and knowledge are the substrate — capability, reach, and grounding. Chat is where users meet them; the trust states gate every irreversible move. Agentic Rippling is what happens when all of those compose.

In-flight prototypes — AI-assisted payroll and provisioning workflows

Rippling AI was the most successful launch we've ever done. On the heels of this launch, Rippling's revenue is growing 78% YoY at over $1 billion in ARR, and that growth rate has increased every quarter for three straight quarters.